Deep Learning Based Normalization

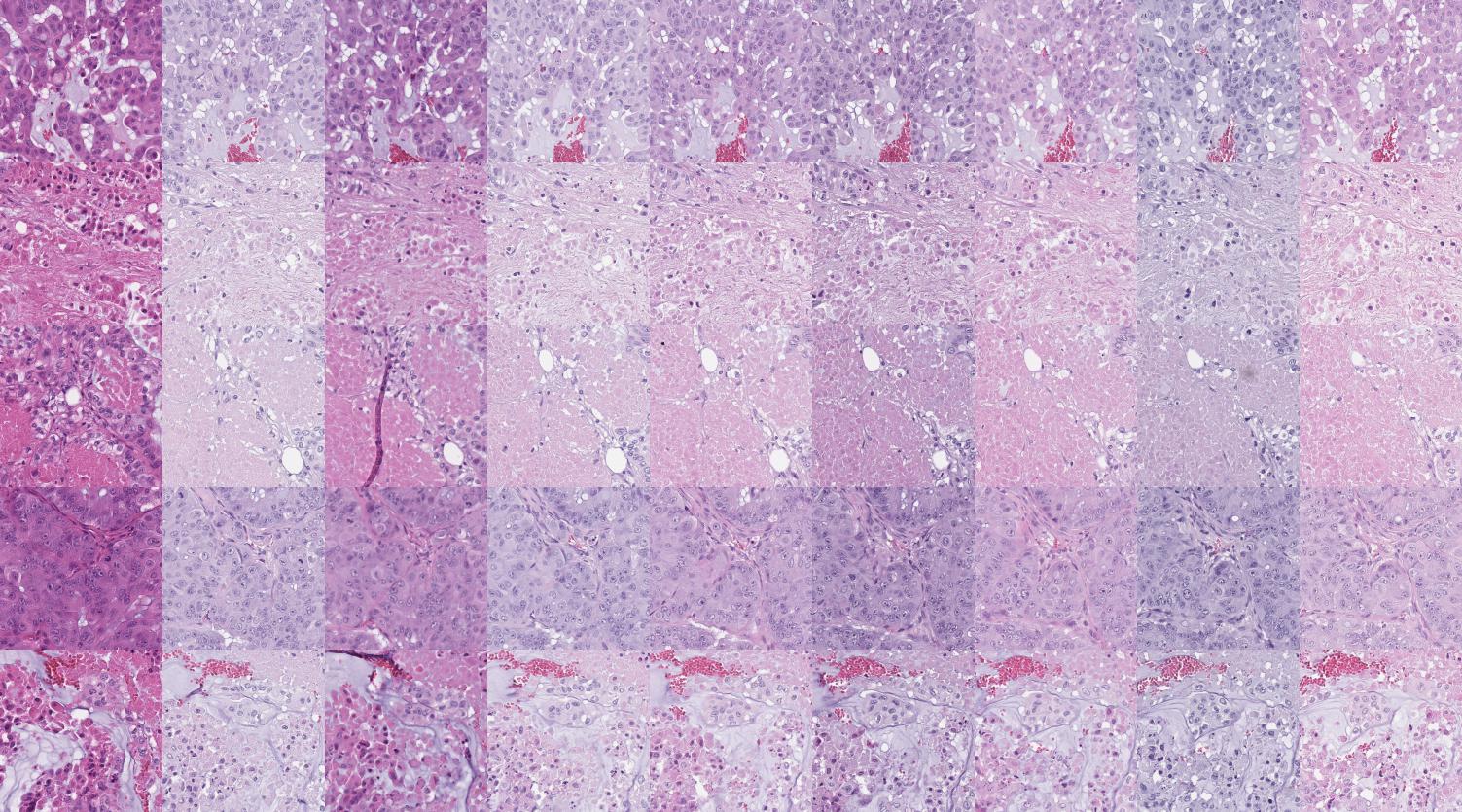

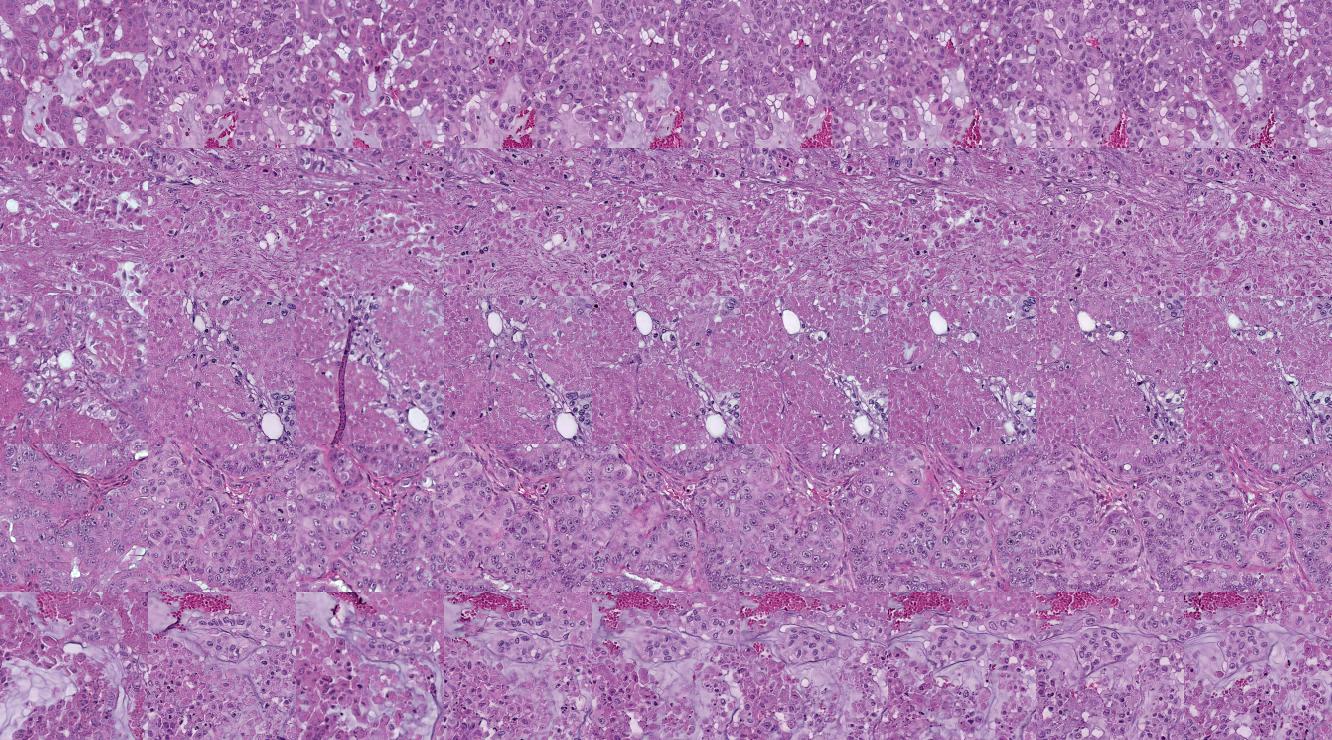

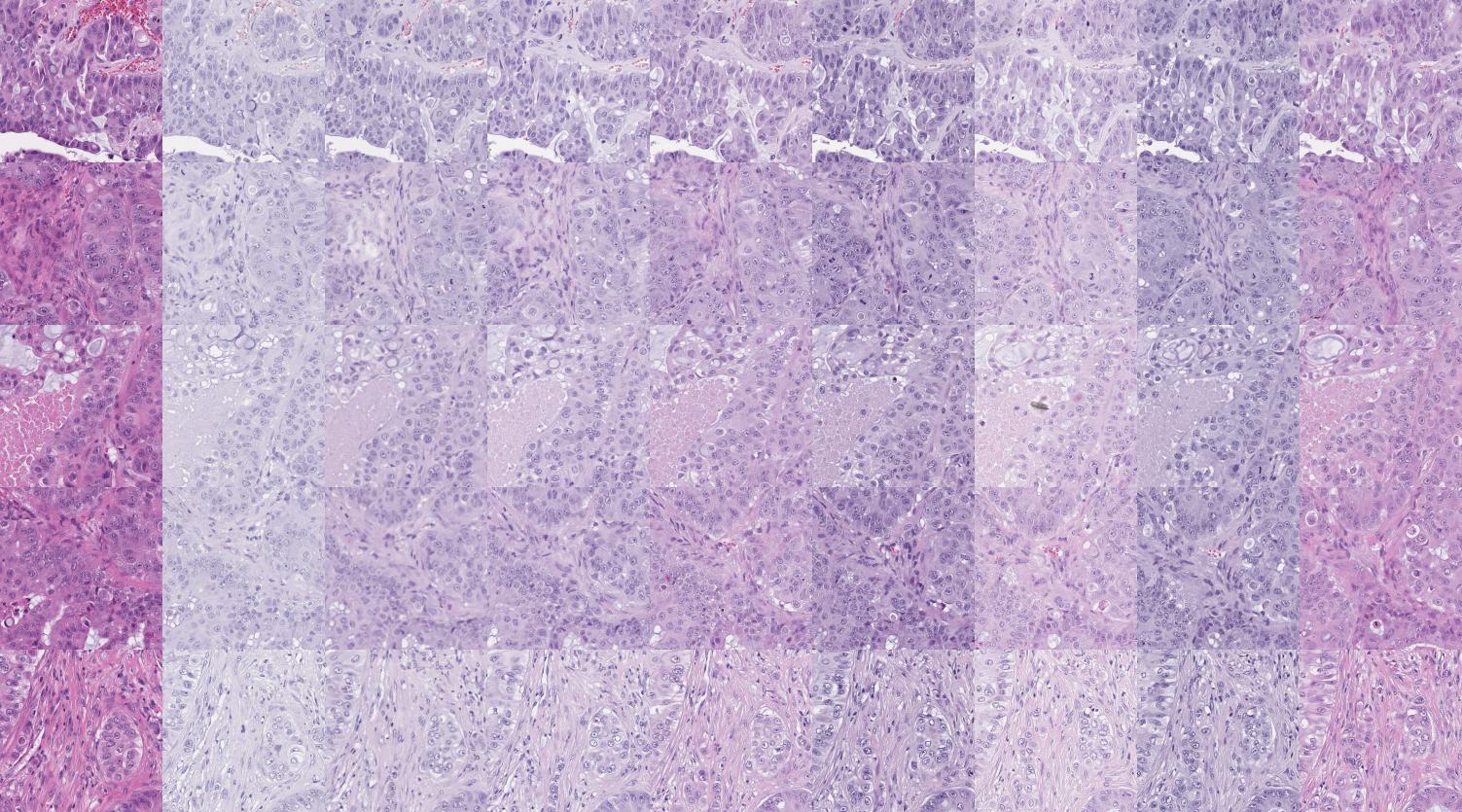

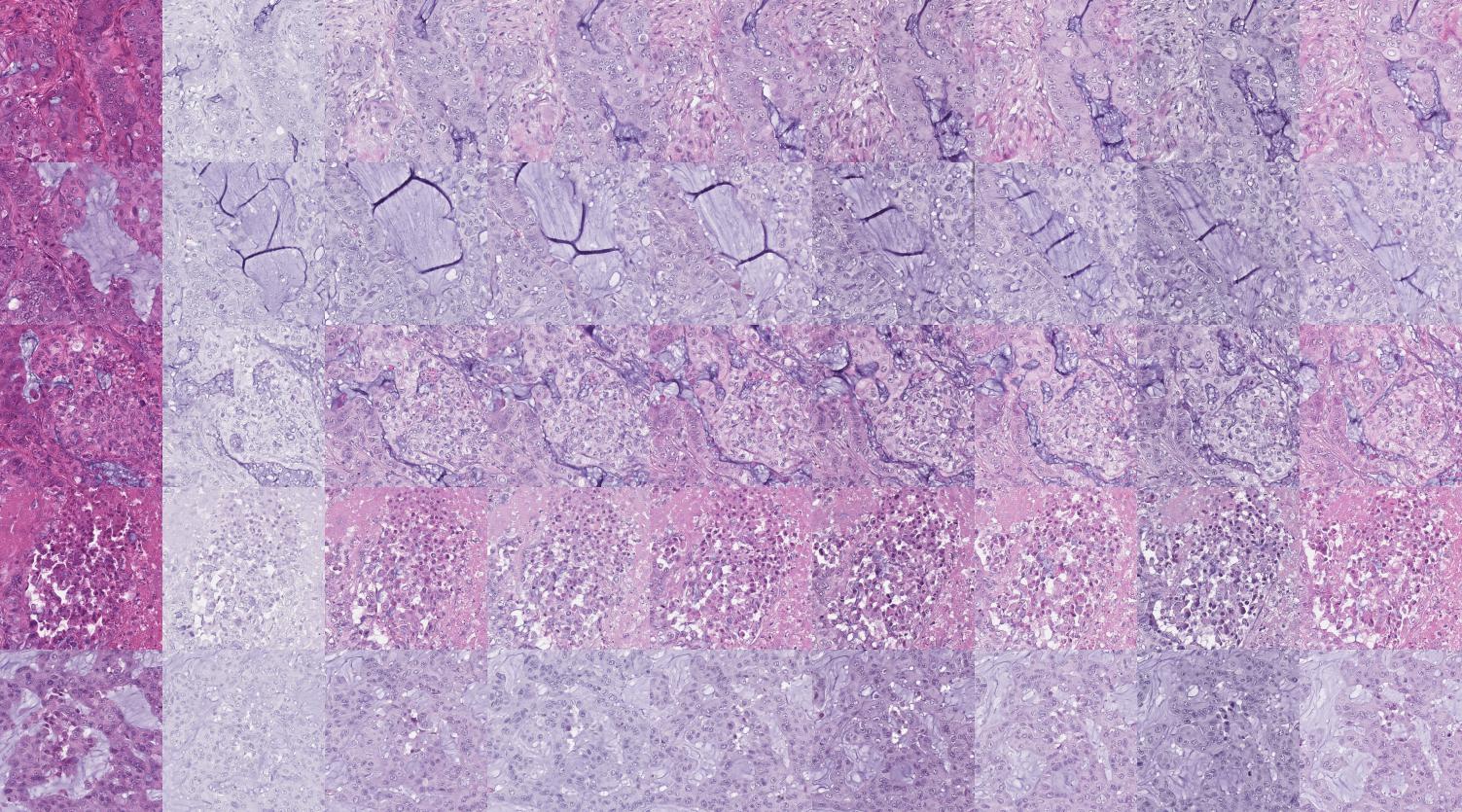

We propose a novel network module called Feature Aware Normalization that can be incorporated into existing architectures. The module combines advantages of LSTM units and Batch Normalization. Scale and shift parameters are computed on the fly by a pre-trained (and optionally fine-tuned) deep network. Adapting the algorithm to your dataset requires retraining as few as 30,000 parameters.
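
To make the idea concrete, here is a minimal sketch of what such a unit might compute. It is not the implementation from this repository. It assumes that the scale passes through a sigmoid and the shift through a tanh (borrowing the gating style of LSTM units), and that the inputs are standardized per channel as in Batch Normalization. The names `feature_aware_norm`, `gamma`, and `beta` are hypothetical; `gamma` and `beta` stand in for the outputs of the pre-trained network and are passed in as plain lists here.

```python
import math


def sigmoid(v):
    """Logistic function, used here as a multiplicative gate (assumption)."""
    return 1.0 / (1.0 + math.exp(-v))


def feature_aware_norm(x, gamma, beta, eps=1e-5):
    """Sketch of a feature-aware normalization step.

    x     -- list of channels, each a flat list of activations
    gamma -- same shape as x; raw scale values from a context network (assumed)
    beta  -- same shape as x; raw shift values from a context network (assumed)
    """
    out = []
    for xc, gc, bc in zip(x, gamma, beta):
        # Standardize each channel, as in Batch Normalization.
        mu = sum(xc) / len(xc)
        var = sum((v - mu) ** 2 for v in xc) / len(xc)
        sigma = math.sqrt(var + eps)
        # Gate the normalized value: sigmoid for scale, tanh for shift (assumption).
        out.append([
            sigmoid(g) * (v - mu) / sigma + math.tanh(b)
            for v, g, b in zip(xc, gc, bc)
        ])
    return out


# Toy usage: one channel with three activations and neutral gates.
x = [[1.0, 2.0, 3.0]]
zeros = [[0.0, 0.0, 0.0]]
y = feature_aware_norm(x, zeros, zeros)
```

In this sketch only the mapping that produces `gamma` and `beta` would need training, which is in the spirit of the small parameter count mentioned above; the exact layers involved are not specified here.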